Spark RDD flatMap()

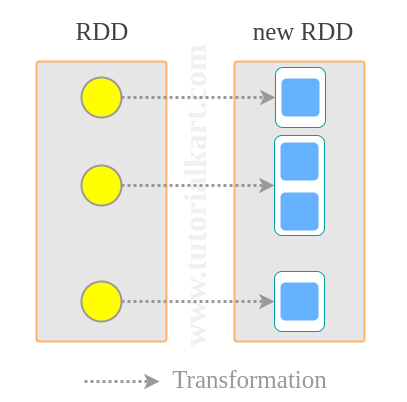

In this Spark tutorial, we shall learn to flatMap one RDD to another. Flat-mapping transforms each element of an RDD with a function that can return multiple elements, and places all of the returned elements into a new RDD. A simple example is applying flatMap to an RDD of strings and using a split function to return the words to a new RDD.
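To make the difference between mapping and flat-mapping concrete, here is a minimal sketch in plain Python. It does not use Spark: the list `lines` is a hypothetical stand-in for an RDD, and `split_words` is an illustrative helper, so this only models the semantics described above.

```python
from itertools import chain

# Hypothetical stand-in for an RDD of lines: a plain Python list.
lines = ["Learn Apache Spark", "Learn to work with RDD"]


def split_words(line):
    """Return the words in a line; one input element yields many outputs."""
    return line.split(" ")


# A plain map keeps one output per input, so the result is a list of lists.
mapped = [split_words(line) for line in lines]

# A flat-map applies the same function, then flattens the results by one level.
flat_mapped = list(chain.from_iterable(split_words(line) for line in lines))

print(mapped)
print(flat_mapped)
```

The mapped result still has two elements (one list per line), while the flat-mapped result has eight elements (one per word).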

Syntax

RDD.flatMap(<function>)

where <function> is the transformation function. For each element of the source RDD, it can return multiple elements to the new RDD.
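Because the function returns a collection for each element, it can contribute several elements, exactly one, or none at all. The sketch below models this in plain Python rather than Spark; `flat_map` and `repeat_if_positive` are hypothetical names introduced only for illustration.

```python
from itertools import chain


def flat_map(function, elements):
    """Plain-Python model of flatMap: apply function, then flatten one level."""
    return list(chain.from_iterable(function(e) for e in elements))


def repeat_if_positive(n):
    """Hypothetical transformation: n copies of n, so 0 contributes nothing."""
    return [n] * n


# 0 yields no elements, 1 yields one element, 3 yields three elements.
result = flat_map(repeat_if_positive, [0, 1, 3])
print(result)
```

The output has four elements even though the input has three, which shows that the size of the new collection depends on what the function returns, not on the size of the source.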

Java Example – Spark RDD flatMap

In this example, we will use flatMap() to convert an RDD of lines read from a text file into an RDD of words. Each line is split on spaces, and the resulting words become the elements of the new RDD.

RDDflatMapExample.java

import java.util.Arrays;

import org.apache.spark.SparkConf;
import org.apache.spark.api.java.JavaRDD;
import org.apache.spark.api.java.JavaSparkContext;

public class RDDflatMapExample {

    public static void main(String[] args) {
        // configure spark
        SparkConf sparkConf = new SparkConf().setAppName("Read Text to RDD")
                .setMaster("local[2]").set("spark.executor.memory", "2g");

        // start a spark context
        JavaSparkContext sc = new JavaSparkContext(sparkConf);

        // provide path to input text file
        String path = "data/rdd/input/sample.txt";

        // read text file to RDD
        JavaRDD<String> lines = sc.textFile(path);

        // flatMap each line to words in the line
        JavaRDD<String> words = lines.flatMap(s -> Arrays.asList(s.split(" ")).iterator());

        // collect RDD for printing
        for (String word : words.collect()) {
            System.out.println(word);
        }
    }
}

Following is the input file we used in the above Java application.

sample.txt

Welcome to TutorialKart Learn Apache Spark Learn to work with RDD

Run this Spark Java application, and you will get the following output in the console.

Output

17/11/29 12:33:59 INFO DAGScheduler: ResultStage 0 (collect at RDDflatMapExample.java:26) finished in 0.513 s
17/11/29 12:33:59 INFO DAGScheduler: Job 0 finished: collect at RDDflatMapExample.java:26, took 0.793858 s
Welcome
to
TutorialKart
Learn
Apache
Spark
Learn
to
work
with
RDD
17/11/29 12:33:59 INFO SparkContext: Invoking stop() from shutdown hook

Python Example – Spark RDD.flatMap()

We shall implement the same use case as in the previous example, but as a Python application.

spark-rdd-flatmap-example.py

from pyspark import SparkContext, SparkConf

if __name__ == "__main__":
    # create Spark context with Spark configuration
    conf = SparkConf().setAppName("Read Text to RDD - Python")
    sc = SparkContext(conf=conf)

    # read input text file to RDD
    lines = sc.textFile("/home/tutorialkart/workspace/spark/sample.txt")

    # flatMap each line to words
    words = lines.flatMap(lambda line: line.split(" "))

    # collect the RDD to a list
    word_list = words.collect()

    # print each word in the list
    for word in word_list:
        print(word)

Run the following command in your console, from the location of your Python file.

$ spark-submit spark-rdd-flatmap-example.py

Spark submits the Python application for running, and the console shows output like the following.

17/11/29 15:15:30 INFO DAGScheduler: ResultStage 0 (collect at /home/tutorialkart/workspace/spark/spark-rdd-flatmap-example.py:18) finished in 1.127 s
17/11/29 15:15:30 INFO DAGScheduler: Job 0 finished: collect at /home/tutorialkart/workspace/spark/spark-rdd-flatmap-example.py:18, took 1.299076 s
Welcome
to
TutorialKart
Learn
Apache
Spark
Learn
to
work
with
RDD
17/11/29 15:15:30 INFO SparkContext: Invoking stop() from shutdown hook
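One detail of the split function used in these examples is worth checking against your own data: splitting on a single space produces empty strings when a line has consecutive or trailing spaces, and those empty strings would become elements of the words RDD. The plain-Python sketch below illustrates this with a hypothetical input line; calling `split()` with no argument is one possible alternative, not something the examples above require.

```python
# Hypothetical line with a double space and a trailing space.
line = "Learn  Apache Spark "

# Splitting on a single space keeps empty strings between adjacent spaces.
by_single_space = line.split(" ")

# Splitting with no argument treats any run of whitespace as one separator.
by_whitespace = line.split()

print(by_single_space)
print(by_whitespace)
```

With the sample input shown earlier, which has single spaces only, both forms give the same words.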

Conclusion

In this Spark tutorial, we learned the syntax of the RDD.flatMap() method and worked through examples in Java and Python.